A simple script for launching dom0 and DVMs / appVMs in hardened modes with anti-forensic protection.

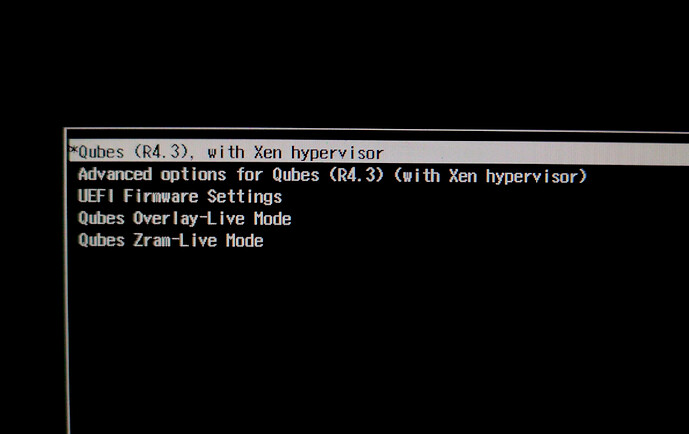

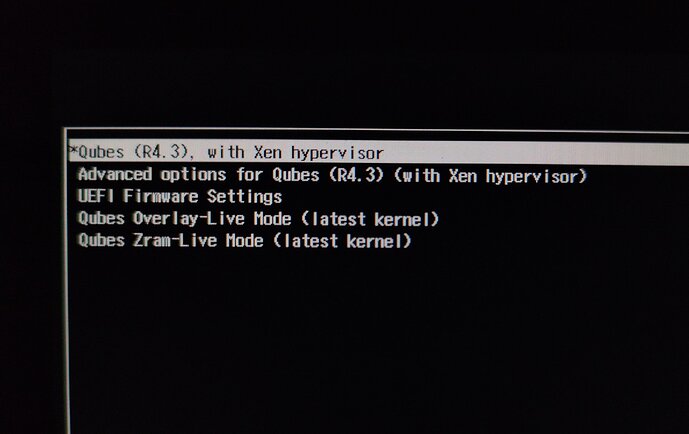

This script adds two new options to the GRUB menu for safely launching live modes. You will get two ways to launch dom0 in RAM for protection against forensics:

- Qubes Overlay-Live Mode – implements live mode by creating an overlay filesystem: the base root FS image is mounted as a read-only lower layer, and changes are stored in a volatile upper layer on tmpfs in RAM. Nothing is written to disk, so all changes are forgotten on reboot. Dom0 is isolated from persistent storage: all operations occur in memory, and the bind mounts that expose the layers do not touch the disk.

- Qubes Zram-Live Mode – implements live mode via zram: the root FS is copied from disk into zram (a compressed block device in RAM) and mounted from there, and the disk copy is then unmounted. Dom0 runs fully from memory, which prevents writes or traces on the medium and protects against compromise of persistent storage.
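A minimal sketch for telling the two modes apart after boot. It relies on the same test the script's `swapoff.sh` helper uses (the root source reported by `findmnt` is `overlay` or `/dev/zram0`); the function name `classify_root_source` and the labels it prints are made up for illustration.

```shell
#!/bin/bash
# Classify the root filesystem source reported by findmnt.
# "overlay" and "/dev/zram0" are the two values the script's swapoff.sh
# helper greps for; anything else is treated as a normal persistent boot.
# The function name and the printed labels are hypothetical.
classify_root_source() {
    case "$1" in
        overlay)    echo "overlay-live" ;;
        /dev/zram0) echo "zram-live" ;;
        *)          echo "persistent" ;;
    esac
}

# Query the running system only when findmnt is available.
if command -v findmnt >/dev/null 2>&1; then
    root_source=$(findmnt -n -o SOURCE / 2>/dev/null || true)
    echo "dom0 mode: $(classify_root_source "$root_source")"
fi
```

Run in a dom0 terminal, this should print `overlay-live` or `zram-live` in the respective live mode and `persistent` in the default boot.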

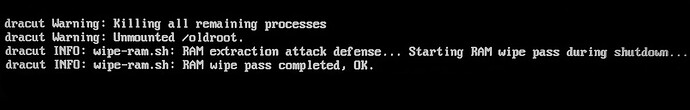

This script also adds the Ram‑Wipe module to wipe memory after shutdown. The same technique is used by Tails and by the original Kicksecure / Whonix to wipe memory as protection against cold boot attacks.

You will see new messages when Qubes shuts down:

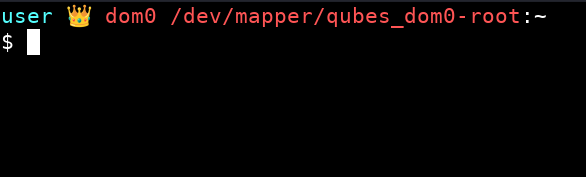

This script also creates an ultra‑hardened dom0 in live modes:

- root read‑only

user@dom0:$ mount | grep /dev/mapper/qubes_dom0-root

/dev/mapper/qubes_dom0-root on /live/image type ext4 (ro,relatime,stripe=16)

- strong hardening mount (`nodev,nosuid,noatime,nodiratime,noexec,nr_inodes=500k`)

- strong kernel hardening (Secureblue hardening)

- grub and initramfs / dracut updates disabled

- swap disabled

- all data (dom0 logs, home-root files, metadata) is destroyed after shutdown.

This keeps malware or an attacker from making persistent changes to dom0. It also protects dom0 from user errors during configuration or when testing new software.
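The read-only claim can be checked from a script as well as by eye. The sketch below parses a comma-separated mount option string such as the `(ro,relatime,stripe=16)` shown above; the helper name `has_mount_opts` is hypothetical, and `/live/image` is taken from the `mount` output in this post.

```shell
#!/bin/bash
# has_mount_opts OPTIONS REQUIRED
# Return 0 if every option in the comma-separated REQUIRED list appears in
# the comma-separated OPTIONS list. (Hypothetical helper, not part of live.sh.)
has_mount_opts() {
    local opts=",$1," required="$2" opt
    local IFS=','
    for opt in $required; do
        case "$opts" in
            *",$opt,"*) ;;      # option present, keep checking
            *) return 1 ;;      # option missing
        esac
    done
    return 0
}

# Check the lower layer only when it is actually mounted (live modes).
if command -v findmnt >/dev/null 2>&1 && findmnt -n /live/image >/dev/null 2>&1; then
    if has_mount_opts "$(findmnt -n -o OPTIONS /live/image)" "ro"; then
        echo "/live/image is read-only"
    else
        echo "WARNING: /live/image is writable"
    fi
fi
```

The same helper can be pointed at any other mount by passing its option string and the flags you expect, for example `"nodev,nosuid,noexec"`.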

This script also creates two hardened ephemeral DVMs with fully ephemeral encryption, plus a hardened RAM DVM for testing the most dangerous software:

- In ephemeral DVMs (`ephemeral-whonix-dvm`, `ephemeral-dvm`), the volatile volume (xvdc), which holds the root overlay and the directories from the private volume, now has ephemeral encryption. This is achieved by `qvm-pool set vm-pool -o ephemeral_volatile=True`, which encrypts the volatile volume with a RAM‑only key that is discarded on shutdown. In addition, `qvm-volume config appvm:root rw False` makes the root volume (xvda) read‑only and mounts a temporary writable overlay whose writes are redirected to the encrypted volatile volume, so system changes outside `/rw` never persist. The directories from the private volume (xvdb) are then mounted in the ephemeral root. All data is lost after shutdown.

`ephemeral-whonix-dvm` does not have root access by default, for maximum protection against malware. For `ephemeral-dvm`, installing the kicksecure-18 template is recommended for the same level of protection against root access.

Because the key is discarded when the DVM shuts down, ephemeral encryption protects that data even if a forensic analyst later gains access to the decrypted, running dom0.

user@dom0:$ qvm-volume info ephemeral-dvm:root

pool vm-pool

vid qubes_dom0/vm-ephemeral-dvm-root

rw False

    user@disp857:/$ lsblk
    NAME       MAJ:MIN RM  SIZE RO TYPE MOUNTPOINTS
    xvda       202:0    1   20G  1 disk
    ├─xvda1    202:1    1  200M  1 part
    ├─xvda2    202:2    1    2M  1 part
    └─xvda3    202:3    1 19.8G  1 part
      └─dmroot 253:0    0 19.8G  0 dm   /rw
                                        /usr/local
                                        /home
                                        /
    xvdb       202:16   1    2G  0 disk
    xvdc       202:32   1   10G  0 disk
    ├─xvdc1    202:33   1    1G  0 part [SWAP]
    └─xvdc2    202:34   1    9G  0 part
      └─dmroot 253:0    0 19.8G  0 dm   /rw
                                        /usr/local
                                        /home
                                        /

- The RAM DVM (`untrusted-ram-dvm`) is created in the varlibqubes pool. In live modes this pool resides entirely in dom0’s RAM, so all its data is destroyed when dom0 shuts down. This DVM doesn’t heavily load dom0 memory and is well suited for simple testing of new software with root privileges.

- New DVMs have the kernel options `init_on_free=1` and `init_on_alloc=1` (Secureblue and Tails have these kernel options by default) and `xen_scrub_pages=1`, for paranoid security and to protect against the leakage of passwords and private data. `init_on_alloc=1` and `init_on_free=1` force the DVM kernel to zero memory pages when they are allocated and when they are freed, so stale passwords or private data do not linger in freed memory. `xen_scrub_pages=1` then makes the guest scrub pages before giving them back to Xen. This kernel and Xen memory wipe protects your data even if a forensic analyst gains access to the decrypted, running dom0.

user@disp857:/$ cat /proc/cmdline | grep -E 'init_on_free=1|init_on_alloc=1|xen_scrub_pages=1'

... xen_scrub_pages=1 init_on_free=1 init_on_alloc=1 selinux=1 security=selinux

- New DVMs also have protection against dom0 timezone leaks. This helps prevent the creation of a unique fingerprint of your system.

user@disp857:/$ timedatectl

Local time: Thu 2026-03-04 19:19:46 UTC

Universal time: Thu 2026-03-04 19:19:46 UTC

RTC time: n/a

Time zone: Etc/UTC (UTC, +0000)
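The `grep -E 'a|b|c'` check on `/proc/cmdline` shown earlier succeeds as soon as any one of the three options is present. A stricter sketch that reports each missing option is below; the function name `missing_kernel_opts` is made up for illustration, and the option names are the ones used in this post.

```shell
#!/bin/bash
# missing_kernel_opts CMDLINE OPT...
# Print every OPT that does not appear as a whole word in CMDLINE.
# (Hypothetical helper, not part of live.sh.)
missing_kernel_opts() {
    local cmdline=" $1 " opt
    shift
    for opt in "$@"; do
        case "$cmdline" in
            *" $opt "*) ;;          # present
            *) echo "$opt" ;;       # missing
        esac
    done
}

# Inside a disposable: check the running kernel's command line.
if [ -r /proc/cmdline ]; then
    missing=$(missing_kernel_opts "$(cat /proc/cmdline)" \
        xen_scrub_pages=1 init_on_free=1 init_on_alloc=1)
    if [ -z "$missing" ]; then
        echo "all three options are present"
    else
        echo "missing kernel options: $missing"
    fi
fi
```

Matching on whole words avoids false positives such as `init_on_free=10`.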

This guide solves the old problems:

- implement live boot by porting grub-live to Qubes - amnesia / non-persistent boot / anti-forensics

- DisposableVMs: support for in-RAM execution only (for anti-forensics)

- Reduce leakage of disposable VM content and history into dom0 filesystem

- Wipe RAM after shutdown

Overlay‑Live Mode is very fast. Overlays are used in Tails and Kicksecure/Whonix. An overlayfs on tmpfs keeps most data on the read-only lower layer and stores only changes in RAM, so you don’t need a full in-memory copy of everything. tmpfs also grows and shrinks dynamically, so memory is used only for files and metadata that were actually modified, not preallocated. This is the best option by default.

Zram‑Live Mode starts slowly (it copies the entire root filesystem and works with a complete dom0 copy) and uses more CPU, but it saves RAM dramatically (about 2x) if you want to run a VM entirely in RAM without ephemeral encryption. It is an experimental mode for running large appVMs, StandaloneVMs or TemplateVMs in RAM, as well as for experimenting with dom0 in a state closer to its default configuration. This mode also works well on devices with 12-16 GB of RAM, where the live modes can otherwise run out of memory.
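To see what compression ratio zram actually reaches on a given machine, the kernel exposes counters in `/sys/block/zram0/mm_stat`, whose first two fields are the uncompressed and compressed data sizes in bytes. The sketch below divides one by the other; the function name `zram_ratio` is hypothetical and the result will vary with the data stored.

```shell
#!/bin/bash
# Read one mm_stat line on stdin and print orig_data_size / compr_data_size
# (fields 1 and 2), or "n/a" while nothing has been stored yet.
# (Hypothetical helper, not part of live.sh.)
zram_ratio() {
    awk '{
        if ($2 > 0) {
            printf "%.2f\n", $1 / $2
        } else {
            print "n/a"
        }
    }'
}

# In Zram-Live Mode the root filesystem lives on /dev/zram0.
if [ -r /sys/block/zram0/mm_stat ]; then
    echo "zram0 compression ratio: $(zram_ratio < /sys/block/zram0/mm_stat)"
fi
```

A value near 2.00 would match the rough "2x" saving mentioned above.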

Both live modes significantly increase dom0 security:

- the root is mounted read‑only,

- dom0 operates in the ultra-hardened live mode,

- ephemeral DVMs operate on volumes with ephemeral encryption,

- the RAM DVM operates fully in dom0 RAM,

- all data is destroyed after shutdown,

- RAM wipe always and everywhere.

Now your private data is protected from forensic analysis. Ephemeral encryption protects your data even if a forensic analyst gains access to the decrypted, running dom0. Xen wipes memory for VMs, and `init_on_free=1` with `init_on_alloc=1` wipe memory for apps in amnesic live DVMs. Dom0 Live, with isolation and strong hardening, is much harder to compromise. Even if a skilled attacker gets into dom0, they won’t be able to make any persistent changes because your root is mounted read‑only, and a reboot will wipe out the attacker’s foothold. You get layered system protection: Xen isolation, robust hardening, a read‑only root, ephemeral encryption, and a non-persistent live mode with RAM wipe.

This also works great for experiments in Qubes or for beginners who want to learn without fear of breaking anything, since all changes disappear after a reboot. It also reduces writes to your SSD, which can extend its lifespan.

Don’t worry about installing these modes: the default Qubes boot is not affected and does not change. I wrote this script to be as safe as possible and isolated from the default Qubes boot:

- New GRUB options are added to `/etc/grub.d/40_custom` (so the script doesn’t modify your `/etc/default/grub`).

- New sysctl options are applied only in live modes.

- New dracut live modules run only in live modes.

- /boot, GRUB and initramfs/dracut updates are disabled in live modes.

- New ephemeral DVMs don’t affect the operation or settings of the default DVMs.

- Swap is disabled in live modes.
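To confirm what the script added to GRUB without opening an editor, the menu entry titles can be pulled out of `/etc/grub.d/40_custom`. This is a small sketch; the function name `list_menuentries` is hypothetical, and it assumes titles are written in single quotes as in the script's own `menuentry` lines.

```shell
#!/bin/bash
# Print the title of every menuentry line in a GRUB snippet file.
# Assumes single-quoted titles, as live.sh writes them.
# (Hypothetical helper, not part of live.sh.)
list_menuentries() {
    sed -n "s/^menuentry '\([^']*\)'.*/\1/p" "$1"
}

# Show the custom entries if the file exists on this system.
if [ -r /etc/grub.d/40_custom ]; then
    list_menuentries /etc/grub.d/40_custom
fi
```

After running the script you would expect to see `Qubes Overlay-Live Mode` and `Qubes Zram-Live Mode` listed.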

Simple script for automatically creating dom0 live modes and anti-forensic DVMs

Make a backup before you run the script!

You just need to:

- Save the script to a text file, for example with the name `live.sh` in `/home/user/` in an appVM.

- Copy the file to dom0. Run this in a dom0 terminal (`qube-name` is the appVM that holds the script):

    qvm-run --pass-io qube-name 'cat /home/user/live.sh' > live.sh

- Make the file executable. Run in a dom0 terminal:

    sudo chmod +x live.sh

  or right-click the script → Properties → Permissions → Program: and click to enable it.

- Run the script in a dom0 terminal with sudo:

    sudo ./live.sh

I recommend installing the kicksecure-18 template before running the script:

qvm-template --enablerepo qubes-templates-community install kicksecure-18

It will allow you to create an ephemeral-dvm with powerful protection and hardening.
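Before running a script as root in dom0, it can be worth confirming that the copy arrived intact. The sketch below compares SHA-256 hashes; the function name `hash_matches` is made up for illustration, and the `qvm-run --pass-io` call in the comments reuses the `qube-name` placeholder from the copy step. It only detects a truncated or corrupted transfer, not a compromised appVM.

```shell
#!/bin/bash
# hash_matches FILE EXPECTED_SHA256
# Return 0 if the SHA-256 of FILE equals EXPECTED_SHA256.
# (Hypothetical helper, not part of live.sh.)
hash_matches() {
    local file=$1 expected=$2 actual
    actual=$(sha256sum "$file" | awk '{ print $1 }') || return 1
    [ "$actual" = "$expected" ]
}

# Example use in dom0, after the copy step (not executed here):
#   expected=$(qvm-run --pass-io qube-name 'sha256sum /home/user/live.sh' | awk '{ print $1 }')
#   hash_matches live.sh "$expected" && echo "copy verified"
```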

#!/bin/bash

# Qubes Dom0 Live Boot, RAM-Wipe, Ephemeral DVMs

# ⚠️ Make backup before running! Run as root: sudo ./live.sh

set -e # Exit on any error

#BOOT_UUID

BOOT_UUID=$(findmnt -n -o UUID /boot 2>/dev/null || echo "AUTO_BOOT_NOT_FOUND")

if [ "$BOOT_UUID" = "AUTO_BOOT_NOT_FOUND" ]; then

BOOT_UUID=$(blkid -s UUID -o value -d $(findmnt -n -o SOURCE /boot 2>/dev/null))

fi

# LUKS_UUID

LUKS_DEVICE=$(blkid -t TYPE="crypto_LUKS" -o device 2>/dev/null | head -n1 || echo "")

if [ -n "$LUKS_DEVICE" ]; then

LUKS_UUID=$(sudo cryptsetup luksUUID "$LUKS_DEVICE" 2>/dev/null)

else

LUKS_UUID="AUTO_LUKS_NOT_FOUND"

fi

# Latest XEN_PATH

XEN_PATH=$(ls /boot/xen*.gz 2>/dev/null | sort -V | tail -1 | xargs basename 2>/dev/null || echo "/xen-4.19.4.gz")

# Latest kernel/initramfs

LATEST_KERNEL=$(ls /boot/vmlinuz-*qubes*.x86_64 2>/dev/null | grep -E 'qubes\.fc[0-9]+' | sort -V | tail -1 | xargs basename)

LATEST_INITRAMFS=$(echo "/initramfs-${LATEST_KERNEL#vmlinuz-}.img")

# Max memory dom0

system_total_mb=$(xl info | grep total_memory | awk '{print $3}')

if [ -n "$system_total_mb" ] && [ "$system_total_mb" -gt 0 ] 2>/dev/null; then

# 80% total_memory

DOM0_MAX_MB=$((system_total_mb * 80 / 100))

DOM0_MAX_GB=$((DOM0_MAX_MB / 1024))

DOM0_MAX_RAM="dom0_mem=max:${DOM0_MAX_MB}M"

DOM0_MAX_GBG="${DOM0_MAX_GB}G"

else

DOM0_MAX_RAM="dom0_mem=max:10240M"

DOM0_MAX_GB="10"

DOM0_MAX_GBG="10G"

fi

# qubes_dom0-root

Qubes_Root=$(findmnt -n -o SOURCE /)

echo "=== Qubes Dom0 Live Boot Setup ==="

# Disable Dom0 Swap and Add Sysctl Hardening:

cat > /home/user/.config/swapoff.sh << 'EOF'

#!/bin/bash

sleep 2

if findmnt -n -o SOURCE / | grep -qE "(overlay|/dev/zram0)"; then

sudo systemctl stop dev-zram0.swap systemd-zram-setup@zram0.service

sudo systemctl disable dev-zram0.swap systemd-zram-setup@zram0.service

sudo systemctl mask dev-zram0.swap systemd-zram-setup@zram0.service

sudo swapoff /dev/zram0

sudo swapoff -a

notify-send --expire-time=20000 "Live session is running" "dom0 mode: $(findmnt -n -o SOURCE /)" --icon=dialog-information

sudo sed -i '/[[:space:]]\+swap[[:space:]]\+/s/^/#/' /etc/fstab

sudo sed -i '/[[:space:]]\+none[[:space:]]\+swap[[:space:]]\+/s/^/#/' /etc/fstab

sudo sed -i '/[[:space:]]\?\/dev\/.*[[:space:]]\+swap[[:space:]]\+/s/^/#/' /etc/fstab

sudo sysctl -w kernel.sysrq=0

sudo sysctl -w kernel.perf_event_paranoid=3

sudo sysctl -w kernel.kptr_restrict=2

sudo sysctl -w kernel.panic=5

sudo sysctl -w fs.protected_regular=2

sudo sysctl -w fs.protected_fifos=2

sudo sysctl -w kernel.printk="3 3 3 3"

sudo sysctl -w kernel.kexec_load_disabled=1

sudo sysctl -w kernel.io_uring_disabled=2

sudo chattr +i /boot/grub2/grub.cfg

sudo chattr +i /boot

else

sudo chattr -i /boot/grub2/grub.cfg

sudo chattr -i /boot

fi

EOF

chmod 755 /home/user/.config/swapoff.sh

mkdir -p /home/user/.config/autostart/

cat > /home/user/.config/autostart/swapoff.desktop << 'EOF'

[Desktop Entry]

Encoding=UTF-8

Version=0.9.4

Type=Application

Name=swapoff

Comment=

Exec=/home/user/.config/swapoff.sh

OnlyShowIn=XFCE;

RunHook=0

StartupNotify=false

Terminal=false

Hidden=false

EOF

# Create Dracut directories

echo "Creating Dracut modules..."

mkdir -p /usr/lib/dracut/modules.d/90ramboot

mkdir -p /usr/lib/dracut/modules.d/90overlayfs-root

# Create 90ramboot/module-setup.sh

cat > /usr/lib/dracut/modules.d/90ramboot/module-setup.sh << 'EOF'

#!/usr/bin/bash

check() {

return 0

}

depends() {

return 0

}

install() {

inst_simple "$moddir/zram-mount.sh"

inst_hook cleanup 00 "$moddir/zram-mount.sh"

}

EOF

chmod 755 /usr/lib/dracut/modules.d/90ramboot/module-setup.sh

# Create 90overlayfs-root/module-setup.sh

cat > /usr/lib/dracut/modules.d/90overlayfs-root/module-setup.sh << 'EOF'

#!/bin/bash

check() {

[ -d /lib/modules/$kernel/kernel/fs/overlayfs ] || return 1

}

depends() {

return 0

}

installkernel() {

hostonly='' instmods overlay

}

install() {

inst_hook pre-pivot 10 "$moddir/overlay-mount.sh"

}

EOF

chmod 755 /usr/lib/dracut/modules.d/90overlayfs-root/module-setup.sh

# Create overlay-mount.sh

cat > /usr/lib/dracut/modules.d/90overlayfs-root/overlay-mount.sh << 'EOF'

#!/bin/sh

. /lib/dracut-lib.sh

if ! getargbool 0 rootovl ; then

return

fi

modprobe overlay

mount -o remount,nolock,noatime $NEWROOT

mkdir -p /live/image

mount --bind $NEWROOT /live/image

umount $NEWROOT

mkdir /cow

mount -n -t tmpfs -o mode=0755,size=100%,nr_inodes=500k,noexec,nodev,nosuid,noatime,nodiratime tmpfs /cow

mkdir /cow/work /cow/rw

mount -t overlay -o noatime,nodiratime,volatile,lowerdir=/live/image,upperdir=/cow/rw,workdir=/cow/work,default_permissions,relatime overlay $NEWROOT

mkdir -p $NEWROOT/live/cow

mkdir -p $NEWROOT/live/image

mount --bind /cow/rw $NEWROOT/live/cow

umount /cow

mount --bind /live/image $NEWROOT/live/image

umount /live/image

umount $NEWROOT/live/cow

EOF

chmod 755 /usr/lib/dracut/modules.d/90overlayfs-root/overlay-mount.sh

# Create zram-mount.sh

cat > /usr/lib/dracut/modules.d/90ramboot/zram-mount.sh << EOF

#!/bin/sh

. /lib/dracut-lib.sh

if ! getargbool 0 rootzram ; then

return

fi

mkdir /mnt

umount /sysroot

mount -o ro $Qubes_Root /mnt

modprobe zram

echo $DOM0_MAX_GBG > /sys/block/zram0/disksize

/mnt/usr/sbin/mkfs.ext2 /dev/zram0

mount -o nodev,nosuid,noatime,nodiratime /dev/zram0 /sysroot

cp -a /mnt/* /sysroot

umount /mnt

exit 0

EOF

chmod 755 /usr/lib/dracut/modules.d/90ramboot/zram-mount.sh

# Create ramboot dracut.conf

cat > /etc/dracut.conf.d/ramboot.conf << 'EOF'

add_drivers+=" zram "

add_dracutmodules+=" ramboot "

EOF

# Create module directory

mkdir -p /usr/lib/dracut/modules.d/40ram-wipe/

# Create module-setup.sh

cat > /usr/lib/dracut/modules.d/40ram-wipe/module-setup.sh << 'EOF'

#!/bin/bash

# -*- mode: shell-script; indent-tabs-mode: nil; sh-basic-offset: 4; -*-

# ex: ts=8 sw=4 sts=4 et filetype=sh

## Copyright (C) 2023 - 2025 ENCRYPTED SUPPORT LLC <adrelanos@whonix.org>

## See the file COPYING for copying conditions.

# called by dracut

check() {

require_binaries sync || return 1

require_binaries sleep || return 1

require_binaries dmsetup || return 1

return 0

}

# called by dracut

depends() {

return 0

}

# called by dracut

install() {

inst_simple "/usr/libexec/ram-wipe/ram-wipe-lib.sh" "/lib/ram-wipe-lib.sh"

inst_multiple sync

inst_multiple sleep

inst_multiple dmsetup

inst_hook shutdown 40 "$moddir/wipe-ram.sh"

inst_hook cleanup 80 "$moddir/wipe-ram-needshutdown.sh"

}

# called by dracut

installkernel() {

return 0

}

EOF

chmod +x /usr/lib/dracut/modules.d/40ram-wipe/module-setup.sh

# Create wipe-ram-needshutdown.sh

cat > /usr/lib/dracut/modules.d/40ram-wipe/wipe-ram-needshutdown.sh << 'EOF'

#!/bin/sh

## Copyright (C) 2023 - 2025 ENCRYPTED SUPPORT LLC <adrelanos@whonix.org>

## See the file COPYING for copying conditions.

type getarg >/dev/null 2>&1 || . /lib/dracut-lib.sh

. /lib/ram-wipe-lib.sh

ram_wipe_check_needshutdown() {

## 'local' is unavailable in 'sh'.

#local kernel_wiperam_setting

kernel_wiperam_setting="$(getarg wiperam)"

if [ "$kernel_wiperam_setting" = "skip" ]; then

force_echo "wipe-ram-needshutdown.sh: Skip, because wiperam=skip kernel parameter detected, OK."

return 0

fi

true "wipe-ram-needshutdown.sh: Calling dracut function need_shutdown to drop back into initramfs at shutdown, OK."

need_shutdown

return 0

}

ram_wipe_check_needshutdown

EOF

chmod +x /usr/lib/dracut/modules.d/40ram-wipe/wipe-ram-needshutdown.sh

# Create wipe-ram.sh

cat > /usr/lib/dracut/modules.d/40ram-wipe/wipe-ram.sh << 'EOF'

#!/bin/sh

## Copyright (C) 2023 - 2025 ENCRYPTED SUPPORT LLC <adrelanos@whonix.org>

## See the file COPYING for copying conditions.

## Credits:

## First version by @friedy10.

## https://github.com/friedy10/dracut/blob/master/modules.d/40sdmem/wipe.sh

## Use '.' and not 'source' in 'sh'.

. /lib/ram-wipe-lib.sh

drop_caches() {

sync

## https://gitlab.tails.boum.org/tails/tails/-/blob/master/config/chroot_local-includes/usr/local/lib/initramfs-pre-shutdown-hook

### Ensure any remaining disk cache is erased by Linux' memory poisoning

echo 3 > /proc/sys/vm/drop_caches

sync

}

ram_wipe() {

## 'local' is unavailable in 'sh'.

#local kernel_wiperam_setting dmsetup_actual_output dmsetup_expected_output

## getarg returns the last parameter only.

kernel_wiperam_setting="$(getarg wiperam)"

if [ "$kernel_wiperam_setting" = "skip" ]; then

force_echo "wipe-ram.sh: Skip, because wiperam=skip kernel parameter detected, OK."

return 0

fi

force_echo "wipe-ram.sh: RAM extraction attack defense... Starting RAM wipe pass during shutdown..."

drop_caches

force_echo "wipe-ram.sh: RAM wipe pass completed, OK."

}

ram_wipe

EOF

chmod +x /usr/lib/dracut/modules.d/40ram-wipe/wipe-ram.sh

# Create ram-wipe dracut.conf.d

cat > /usr/lib/dracut/dracut.conf.d/30-ram-wipe.conf << 'EOF'

add_dracutmodules+=" ram-wipe "

EOF

# Create ram-wipe-lib.sh

mkdir -p /usr/libexec/ram-wipe

cat > /usr/libexec/ram-wipe/ram-wipe-lib.sh << 'EOF'

#!/bin/sh

## Copyright (C) 2023 - 2025 ENCRYPTED SUPPORT LLC <adrelanos@whonix.org>

## See the file COPYING for copying conditions.

## Based on:

## /usr/lib/dracut/modules.d/99base/dracut-lib.sh

if [ -z "$DRACUT_SYSTEMD" ]; then

force_echo() {

echo "<28>dracut INFO: $*" > /dev/kmsg

echo "dracut INFO: $*" >&2

}

else

force_echo() {

echo "INFO: $*" >&2

}

fi

EOF

chmod +x /usr/libexec/ram-wipe/ram-wipe-lib.sh

# Update INITRAMFS

dracut --verbose --force

# Create GRUB custom

echo "Creating GRUB custom ..."

cat > /etc/grub.d/40_custom << EOF

#!/usr/bin/sh

exec tail -n +3 \$0

menuentry 'Qubes Overlay-Live Mode' --class qubes --class gnu-linux --class gnu --class os --class xen \$menuentry_id_option 'xen-gnulinux-simple-/dev/mapper/qubes_dom0-root' {

insmod part_gpt

insmod ext2

search --no-floppy --fs-uuid --set=root $BOOT_UUID

echo 'Loading Xen ...'

if [ "\$grub_platform" = "pc" -o "\$grub_platform" = "" ]; then

xen_rm_opts=

else

xen_rm_opts="no-real-mode edd=off"

fi

insmod multiboot2

multiboot2 /$XEN_PATH placeholder console=none dom0_mem=min:1024M $DOM0_MAX_RAM ucode=scan smt=off gnttab_max_frames=2048 gnttab_max_maptrack_frames=4096 \${xen_rm_opts}

echo 'Loading Linux $LATEST_KERNEL ...'

module2 /$LATEST_KERNEL placeholder root=/dev/mapper/qubes_dom0-root ro rd.luks.uuid=$LUKS_UUID rd.lvm.lv=qubes_dom0/root rd.lvm.lv=qubes_dom0/swap plymouth.ignore-serial-consoles rhgb rootovl quiet module.sig_enforce=1 bootscrub=on usbcore.authorized_default=0

echo 'Loading initial ramdisk ...'

insmod multiboot2

module2 --nounzip $LATEST_INITRAMFS

}

menuentry 'Qubes Zram-Live Mode' --class qubes --class gnu-linux --class gnu --class os --class xen \$menuentry_id_option 'xen-gnulinux-simple-/dev/mapper/qubes_dom0-root' {

insmod part_gpt

insmod ext2

search --no-floppy --fs-uuid --set=root $BOOT_UUID

echo 'Loading Xen ...'

if [ "\$grub_platform" = "pc" -o "\$grub_platform" = "" ]; then

xen_rm_opts=

else

xen_rm_opts="no-real-mode edd=off"

fi

insmod multiboot2

multiboot2 /$XEN_PATH placeholder console=none dom0_mem=min:1024M $DOM0_MAX_RAM ucode=scan smt=off gnttab_max_frames=2048 gnttab_max_maptrack_frames=4096 \${xen_rm_opts}

echo 'Loading Linux $LATEST_KERNEL ...'

module2 /$LATEST_KERNEL placeholder root=/dev/mapper/qubes_dom0-root ro rd.luks.uuid=$LUKS_UUID rd.lvm.lv=qubes_dom0/root rd.lvm.lv=qubes_dom0/swap plymouth.ignore-serial-consoles rhgb rootzram quiet module.sig_enforce=1 bootscrub=on usbcore.authorized_default=0

echo 'Loading initial ramdisk ...'

insmod multiboot2

module2 --nounzip $LATEST_INITRAMFS

}

EOF

chmod 755 /etc/grub.d/40_custom

# Update GRUB

grub2-mkconfig -o /boot/grub2/grub.cfg

# Creating ephemeral-dvms with untrusted-ram-dvm support (FIXED)

check_prerequisites() {

local default_dispvm=$(qubes-prefs default_dispvm 2>/dev/null || echo "")

local whonix_dvm="whonix-workstation-18-dvm"

if [ -z "$default_dispvm" ] || ! qvm-ls "$default_dispvm" >/dev/null 2>&1 || ! qvm-ls "$whonix_dvm" >/dev/null 2>&1; then

echo "Missing default_dispvm ($(qubes-prefs default_dispvm 2>/dev/null || echo "not set")) or $whonix_dvm. Exiting."

echo "ephemeral-dvms were not created"

echo "dom0 live modes were successfully created"

exit 1

fi

echo "Prerequisites OK: default_dispvm=$default_dispvm, $whonix_dvm"

}

ephemeral_exist() {

qvm-ls ephemeral-dvm >/dev/null 2>&1 && qvm-ls ephemeral-whonix-dvm >/dev/null 2>&1

}

untrusted_ram_exist() {

qvm-ls untrusted-ram-dvm >/dev/null 2>&1

}

vm_exists() {

qvm-ls "$1" >/dev/null 2>&1

}

get_default_dispvm() {

qubes-prefs default_dispvm 2>/dev/null || echo ""

}

main() {

check_prerequisites

local default_dispvm=$(get_default_dispvm)

echo "Using default_dispvm: $default_dispvm"

echo "Checking existing ephemeral VMs..."

if ephemeral_exist; then

echo "✓ ephemeral-dvm and ephemeral-whonix-dvm already exist, skipping creation"

else

echo "Creating missing ephemeral VMs..."

# Create ephemeral-dvm

if ! vm_exists ephemeral-dvm; then

echo " Creating ephemeral-dvm..."

if qvm-ls kicksecure-18 >/dev/null 2>&1; then

echo " Using kicksecure-18 template..."

qvm-create --class AppVM --label purple --template kicksecure-18 ephemeral-dvm

if vm_exists ephemeral-dvm; then

qvm-prefs ephemeral-dvm template_for_dispvms True

qvm-features ephemeral-dvm appmenus-dispvm 1

qvm-appmenus --update ephemeral-dvm || echo " Warning: appmenus update failed"

qvm-run -u root ephemeral-dvm "cat > /rw/config/rc.local << 'EOL'

#!/bin/sh

timedatectl set-timezone Etc/UTC

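# Wait up to 15 seconds for the volatile volume (brace ranges are not POSIX sh)

for i in 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15; do

if [ -b /dev/xvdc ] && mountpoint -q /volatile 2>/dev/null; then

break

fi

sleep 1

done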

if ! mountpoint -q /volatile 2>/dev/null; then

mount /dev/xvdc /volatile || echo \"Volatile mount failed\"

fi

remount_dir() {

local dir=\"\$1\"

local volatile_dir=\"/volatile\$dir\"

mkdir -p \"\$volatile_dir\"

[ -z \"\$(ls -A \"\$volatile_dir\" 2>/dev/null)\" ] && cp -a \"/rw\$dir/.\" \"\$volatile_dir/\" 2>/dev/null || true

umount -l \"\$dir\" 2>/dev/null || true

mount --bind \"\$volatile_dir\" \"\$dir\" || echo \"Bind \$dir failed\"

}

mkdir -p /volatile/home

cp -a /rw/home/. /volatile/home/ 2>/dev/null || true

umount -l /home 2>/dev/null || true

mount --bind /volatile/home /home

remount_dir \"/var/spool/cron\"

remount_dir \"/usr/local\"

remount_dir \"/var/lib/systemcheck\"

remount_dir \"/var/lib/canary\"

remount_dir \"/var/cache/setup-dist\"

remount_dir \"/var/lib/sdwdate\"

remount_dir \"/var/lib/dummy-dependency\"

remount_dir \"/var/cache/anon-base-files\"

remount_dir \"/var/lib/whonix\"

USER_NAME=\"user\"

USER_HOME=\"/home/\$USER_NAME\"

LOCAL_APP_DIR=\"\$USER_HOME/.local/share/applications\"

SYSTEM_DESKTOP=\"/usr/share/applications/pcmanfm-qt.desktop\"

USER_DESKTOP=\"\$LOCAL_APP_DIR/pcmanfm-qt.desktop\"

mkdir -p \"\$LOCAL_APP_DIR\"

if [ -r \"\$SYSTEM_DESKTOP\" ]; then

if [ ! -e \"\$USER_DESKTOP\" ]; then

cp \"\$SYSTEM_DESKTOP\" \"\$USER_DESKTOP\"

fi

sed -i \"s|^Exec=.*|Exec=pcmanfm-qt \$USER_HOME|\" \"\$USER_DESKTOP\"

fi

QTERMINAL_USER=\"\$LOCAL_APP_DIR/qterminal.desktop\"

if [ -r \"/usr/share/applications/qterminal.desktop\" ]; then

cp \"/usr/share/applications/qterminal.desktop\" \"\$QTERMINAL_USER\"

sed -i \"0,/^Exec=/s|^Exec=.*|Exec=bash -c 'cd /home/user \&\& exec qterminal'|\" \"\$QTERMINAL_USER\"

fi

sleep 60

remount_dir \"/rw\"

EOL

chmod +x /rw/config/rc.local" || echo " Warning: rc.local setup failed"

echo " ✓ ephemeral-dvm created from kicksecure-18 with rc.local"

else

echo " ERROR: Failed to create ephemeral-dvm from kicksecure-18"

fi

else

echo " kicksecure-18 not found, using default_dispvm clone ($default_dispvm)..."

if vm_exists "$default_dispvm" && ! vm_exists ephemeral-dvm; then

qvm-clone -P varlibqubes "$default_dispvm" ephemeral-dvm

if vm_exists ephemeral-dvm; then

qvm-prefs ephemeral-dvm label purple

qvm-prefs ephemeral-dvm template_for_dispvms True

qvm-features ephemeral-dvm appmenus-dispvm 1

# No qvm-appmenus --update for a clone!

qvm-run -u root ephemeral-dvm "cat > /rw/config/rc.local << 'EOL'

#!/bin/sh

timedatectl set-timezone Etc/UTC

mount /dev/xvdc /volatile 2>/dev/null || true

remount_dir() {

local dir=\$1

local volatile_dir=/volatile\$dir

mkdir -p \$volatile_dir

[ -z \"\$(ls -A \$volatile_dir 2>/dev/null)\" ] && cp -a \"/rw\$dir/.\" \$volatile_dir/ 2>/dev/null || true

umount -l \$dir 2>/dev/null || true

mount --bind \$volatile_dir \$dir || true

}

mkdir -p /volatile/home

cp -a /rw/home/. /volatile/home/ 2>/dev/null || true

umount -l /home 2>/dev/null || true

mount --bind /volatile/home /home

remount_dir /var/spool/cron

remount_dir /usr/local

sleep 60

remount_dir /rw

EOL

chmod +x /rw/config/rc.local" || echo " Warning: rc.local setup failed"

echo " ✓ ephemeral-dvm created from $default_dispvm with rc.local"

else

echo " ERROR: Failed to clone $default_dispvm -> ephemeral-dvm"

fi

else

echo " WARNING: Cannot create ephemeral-dvm (default_dispvm not available)"

fi

fi

fi

# Create ephemeral-whonix-dvm

if ! vm_exists ephemeral-whonix-dvm; then

echo " Creating ephemeral-whonix-dvm..."

if vm_exists whonix-workstation-18-dvm && ! vm_exists ephemeral-whonix-dvm; then

qvm-clone whonix-workstation-18-dvm ephemeral-whonix-dvm

if vm_exists ephemeral-whonix-dvm; then

qvm-prefs ephemeral-whonix-dvm label purple

qvm-run -u root ephemeral-whonix-dvm "cat > /rw/config/rc.local << 'EOL'

#!/bin/sh

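# Wait up to 15 seconds for the volatile volume (brace ranges are not POSIX sh)

for i in 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15; do

if [ -b /dev/xvdc ] && mountpoint -q /volatile 2>/dev/null; then

break

fi

sleep 1

done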

if ! mountpoint -q /volatile 2>/dev/null; then

mount /dev/xvdc /volatile || echo \"Volatile mount failed\"

fi

remount_dir() {

local dir=\"\$1\"

local volatile_dir=\"/volatile\$dir\"

mkdir -p \"\$volatile_dir\"

[ -z \"\$(ls -A \"\$volatile_dir\" 2>/dev/null)\" ] && cp -a \"/rw\$dir/.\" \"\$volatile_dir/\" 2>/dev/null || true

umount -l \"\$dir\" 2>/dev/null || true

mount --bind \"\$volatile_dir\" \"\$dir\" || echo \"Bind \$dir failed\"

}

mkdir -p /volatile/home

cp -a /rw/home/. /volatile/home/ 2>/dev/null || true

umount -l /home 2>/dev/null || true

mount --bind /volatile/home /home

remount_dir \"/var/spool/cron\"

remount_dir \"/usr/local\"

remount_dir \"/var/lib/systemcheck\"

remount_dir \"/var/lib/canary\"

remount_dir \"/var/cache/setup-dist\"

remount_dir \"/var/lib/sdwdate\"

remount_dir \"/var/lib/dummy-dependency\"

remount_dir \"/var/cache/anon-base-files\"

remount_dir \"/var/lib/whonix\"

USER_NAME=\"user\"

USER_HOME=\"/home/\$USER_NAME\"

LOCAL_APP_DIR=\"\$USER_HOME/.local/share/applications\"

SYSTEM_DESKTOP=\"/usr/share/applications/pcmanfm-qt.desktop\"

USER_DESKTOP=\"\$LOCAL_APP_DIR/pcmanfm-qt.desktop\"

mkdir -p \"\$LOCAL_APP_DIR\"

if [ -r \"\$SYSTEM_DESKTOP\" ]; then

if [ ! -e \"\$USER_DESKTOP\" ]; then

cp \"\$SYSTEM_DESKTOP\" \"\$USER_DESKTOP\"

fi

sed -i \"s|^Exec=.*|Exec=pcmanfm-qt \$USER_HOME|\" \"\$USER_DESKTOP\"

fi

QTERMINAL_USER=\"\$LOCAL_APP_DIR/qterminal.desktop\"

if [ -r \"/usr/share/applications/qterminal.desktop\" ]; then

cp \"/usr/share/applications/qterminal.desktop\" \"\$QTERMINAL_USER\"

sed -i \"0,/^Exec=/s|^Exec=.*|Exec=bash -c 'cd /home/user \&\& exec qterminal'|\" \"\$QTERMINAL_USER\"

fi

sleep 60

remount_dir \"/rw\"

EOL

chmod +x /rw/config/rc.local" || echo " Warning: rc.local setup failed"

echo " ✓ ephemeral-whonix-dvm created with rc.local"

else

echo " ERROR: Failed to clone whonix-workstation-18-dvm -> ephemeral-whonix-dvm"

fi

else

echo " WARNING: Cannot create ephemeral-whonix-dvm"

fi

fi

fi

# Create untrusted-ram-dvm

echo "Checking untrusted-ram-dvm..."

if ! untrusted_ram_exist; then

echo " Creating untrusted-ram-dvm from $default_dispvm..."

if vm_exists "$default_dispvm"; then

qvm-clone -P varlibqubes "$default_dispvm" untrusted-ram-dvm

if vm_exists untrusted-ram-dvm; then

qvm-prefs untrusted-ram-dvm label purple

echo " ✓ untrusted-ram-dvm created from $default_dispvm"

else

echo " ERROR: Failed to clone $default_dispvm -> untrusted-ram-dvm"

fi

else

echo " ERROR: $default_dispvm not available"

fi

else

echo "✓ untrusted-ram-dvm already exists, skipping creation"

fi

echo "Setting up systemd rw.service..."

if [ ! -f /etc/systemd/system/rw.service ]; then

cat > /etc/systemd/system/rw.service << 'EOF'

[Unit]

Description=root rw False

After=qubesd.service

Requires=qubesd.service

[Service]

Type=oneshot

RemainAfterExit=yes

ExecStart=/usr/bin/qvm-pool set varlibqubes -o ephemeral_volatile=True

ExecStart=/usr/bin/qvm-pool set vm-pool -o ephemeral_volatile=True

ExecStart=/usr/bin/qvm-volume config ephemeral-dvm:root rw False

ExecStart=/usr/bin/qvm-volume config ephemeral-whonix-dvm:root rw False

ExecStart=/usr/bin/qvm-volume config untrusted-ram-dvm:root rw False

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable rw.service

echo "✓ rw.service created and enabled"

else

echo "✓ rw.service already exists"

fi

echo "Shutting down VMs if running..."

qvm-shutdown --wait ephemeral-dvm 2>/dev/null || true

qvm-shutdown --wait ephemeral-whonix-dvm 2>/dev/null || true

echo "Configuring storage pools..."

qvm-pool set vm-pool -o ephemeral_volatile=True 2>/dev/null || true

qvm-pool set varlibqubes -o ephemeral_volatile=True 2>/dev/null || true

echo "✓ Storage pools configured for ephemeral mode"

echo "Setting kernel security options..."

if vm_exists ephemeral-dvm; then

qvm-prefs ephemeral-dvm kernelopts "xen_scrub_pages=1 init_on_free=1 init_on_alloc=1" 2>/dev/null || true

fi

if vm_exists ephemeral-whonix-dvm; then

qvm-prefs ephemeral-whonix-dvm kernelopts "xen_scrub_pages=1 init_on_free=1 init_on_alloc=1" 2>/dev/null || true

fi

if vm_exists untrusted-ram-dvm; then

qvm-prefs untrusted-ram-dvm kernelopts "xen_scrub_pages=1 init_on_free=1 init_on_alloc=1" 2>/dev/null || true

fi

echo "✓ Kernel options applied"

echo "Configuring root volumes as read-write false..."

if vm_exists ephemeral-dvm; then

qvm-volume config ephemeral-dvm:root rw False 2>/dev/null || true

fi

if vm_exists ephemeral-whonix-dvm; then

qvm-volume config ephemeral-whonix-dvm:root rw False 2>/dev/null || true

fi

if vm_exists untrusted-ram-dvm; then

qvm-volume config untrusted-ram-dvm:root rw False 2>/dev/null || true

qvm-features untrusted-ram-dvm anon-timezone 1 2>/dev/null || true

fi

echo "✓ Root volumes configured"

echo "Done"

}

main

echo

echo "Done!"

echo "✓ ALL STEPS COMPLETED SUCCESSFULLY!"

Done!

Restart Qubes OS and test the Qubes live modes.

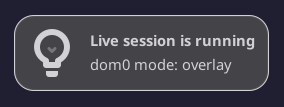

You will see this notification on the desktop when launching live mode:

Next, please review the rules and guidelines for using live modes.

NEW EPHEMERAL APPVMS / DVMS

If you want to create new ephemeral appVMs / DVMs:

- Simply clone the ephemeral DVM.

- Then run this command in a dom0 terminal (DVM_name is the name of your new DVM after cloning):

  `qvm-volume config DVM_name:root rw False`

- Then add the new DVM to rw.service:

  `sudo nano /etc/systemd/system/rw.service`

  and add `ExecStart=/usr/bin/qvm-volume config DVM_name:root rw False` at the end of `[Service]`, then press CTRL + O and CTRL + X.

- You can also add these kernel options for paranoid protection against forensics in your new ephemeral appVMs (this will increase appVM CPU load by 5-15%). Run in a dom0 terminal:

  `qvm-prefs DVM_name kernelopts "xen_scrub_pages=1 init_on_free=1 init_on_alloc=1"`

- If you want to change the new DVM to an appVM: open Qube Manager → settings of the new DVM → Advanced, and uncheck the “Disposable template” option.
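The manual edit of rw.service in the steps above could also be scripted. The following is only a minimal sketch and not part of the original script: `rw_execstart_line` and `add_to_rw_service` are hypothetical helper names, and the code assumes the unit file has an `[Install]` section right after `[Service]`, as the rw.service created by the script does.

```shell
#!/bin/bash
# Hypothetical helpers (not part of the original script).

# Print the ExecStart line that sets a qube's root volume to rw False.
rw_execstart_line() {
    printf 'ExecStart=/usr/bin/qvm-volume config %s:root rw False\n' "$1"
}

# Add that line at the end of [Service] (just before [Install]) in the
# unit file $2, unless the exact line is already there.
add_to_rw_service() {
    local name=$1 unit=$2 line tmp rc=0
    line=$(rw_execstart_line "$name")
    if grep -qxF -- "$line" "$unit"; then
        return 0    # already present, nothing to do
    fi
    tmp=$(mktemp) || return 1
    awk -v line="$line" '
        /^\[Install\]/ && !done { print line; print ""; done = 1 }
        { print }
        END { if (!done) print line }   # no [Install] found: append at the end
    ' "$unit" > "$tmp" && cat "$tmp" > "$unit" || rc=1
    rm -f "$tmp"
    return "$rc"
}
```

In dom0 this would be run as root, for example `add_to_rw_service DVM_name /etc/systemd/system/rw.service`, followed by `systemctl daemon-reload` so that systemd picks up the changed unit. Calling it twice for the same name adds the line only once.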

If you want to make your old appVMs ephemerally encrypted, follow this guide

NEW RAM APPVMS / DVMS in varlibqubes pool

You can also launch VMs in the varlibqubes pool (a pool in dom0), but this will significantly increase RAM load on dom0 and your device. An appVM in the varlibqubes pool loses the discard (TRIM) option because the varlibqubes pool uses an outdated driver, so the size of the appVM will only increase. Also, you won’t be able to customize a RAM appVM in varlibqubes in live dom0 (only in dom0’s persistent mode).

But this is a great option for Windows and BSD appVMs / standaloneVMs. It is also a great option for testing new root software with anti-forensic protection.

To run an appVM in the varlibqubes pool:

First, if you want to launch a large VM (standaloneVM, templateVM or large appVM), increase the dom0 size so that it exceeds the size of the VMs in the varlibqubes pool; otherwise the system may freeze due to insufficient disk space:

(for example, up to 30 GB, plus 10 GB for root-pool; you should also have 30 GB of RAM on the device and dom0_mem=max:30720M in /etc/default/grub)

sudo lvresize --size 30G /dev/mapper/qubes_dom0-root

sudo resize2fs /dev/mapper/qubes_dom0-root

sudo lvresize -L +10G qubes_dom0/root-pool

sudo nano /etc/default/grub

# change the existing dom0_mem=max: value -> dom0_mem=max:30720M

# press CTRL + O and CTRL + X

Then update GRUB:

sudo grub2-mkconfig -o /boot/grub2/grub.cfg

Then, in Qube Manager, click “Clone qube” and in Advanced select varlibqubes as the storage pool. Or create a new appVM and select the varlibqubes storage pool in the Advanced Options.

(If you just want to add another small DVM template or a small appVM to the varlibqubes pool, perform only the last step with cloning.)
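The `dom0_mem=max:30720M` value used in the resize steps above is simply 30 GiB expressed in MiB (30 × 1024). As a rough sketch, the conversion and the edit of the GRUB defaults file could look like this; `dom0_mem_max_opt` and `set_dom0_mem_max` are hypothetical helper names, and the code assumes the file already contains a `dom0_mem=max:` option and that GNU sed is available.

```shell
#!/bin/bash
# Hypothetical helpers (not part of the original script).

# Convert a size in GiB to the Xen option string, e.g. 30 -> dom0_mem=max:30720M
dom0_mem_max_opt() {
    printf 'dom0_mem=max:%dM\n' "$(( $1 * 1024 ))"
}

# Replace the existing dom0_mem=max:<value> in the GRUB defaults file $1
# with the value for $2 GiB. If the option is absent, nothing is changed.
set_dom0_mem_max() {
    local file=$1 opt
    opt=$(dom0_mem_max_opt "$2")
    sed -i -E "s/dom0_mem=max:[0-9]+[KMGkmg]?/${opt}/" "$file"
}
```

For the example above this would be `set_dom0_mem_max /etc/default/grub 30`, run as root, followed by the `grub2-mkconfig` command already shown.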

Useful tips and rules:

If dom0 updates its kernels or you add more RAM to the device, just run the script again in the default persistent dom0 mode, and the live GRUB config will be updated with the new kernel and RAM settings. Re-running the script won’t break anything.

You have 1 minute after launching the ephemeral appVM to customize /rw. Then /rw will be remounted to the volatile volume and you won’t be able to make changes - fully ephemeral!

If you want more time to edit /rw or to keep /rw on the private volume, modify these lines in /rw/config/rc.local:

sleep 60

remount_dir "/rw"
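As an illustration of changing the delay, here is a small sketch that rewrites the `sleep 60` line. `set_rw_delay` is a hypothetical helper, and it assumes the only standalone `sleep N` line in rc.local is the one shown above; it also relies on GNU sed.

```shell
#!/bin/bash
# Hypothetical helper: change the delay before /rw is remounted.
# $1 = path to rc.local, $2 = new delay in seconds.
set_rw_delay() {
    local file=$1 secs=$2
    case $secs in
        ''|*[!0-9]*)
            echo "delay must be a whole number of seconds" >&2
            return 1 ;;
    esac
    # Rewrite every line that consists only of "sleep <number>".
    sed -i -E "s/^([[:space:]]*)sleep[[:space:]]+[0-9]+[[:space:]]*$/\1sleep ${secs}/" "$file"
}
```

For example, `set_rw_delay /rw/config/rc.local 300` would give five minutes instead of one. It has to be run within the first minute after the DVM starts (or with rc.local temporarily disabled), while /rw is still writable.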

Don’t rename ephemeral DVMs! Otherwise, the DVMs will lose ephemeral encryption. If you have renamed ephemeral DVMs, also change their names in this file:

/etc/systemd/system/rw.service

To customize an ephemeral appVM: run the DVM, copy the file /rw/config/rc.local and save it as /rw/config/rc.local-copy, then remove the code from /rw/config/rc.local and reboot the DVM. Make your changes, add the code back from /rw/config/rc.local-copy to /rw/config/rc.local, and reboot the DVM again.
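The save / empty / restore cycle described above can be sketched as two small functions. The names `rc_local_disable` and `rc_local_restore` are hypothetical; the paths follow the text, and the functions are meant to be run as root inside the DVM.

```shell
#!/bin/bash
# Hypothetical helpers; $1 is the rc.local path (default /rw/config/rc.local).

# Step 1: save a copy, then empty rc.local so /rw stays writable after reboot.
rc_local_disable() {
    local f=${1:-/rw/config/rc.local}
    # Refuse if rc.local is already empty, so a second call cannot
    # overwrite the saved copy with an empty file.
    [ -s "$f" ] || { echo "$f is already empty" >&2; return 1; }
    cp -p -- "$f" "$f-copy" && : > "$f"
}

# Step 2 (after making your changes): put the saved code back.
rc_local_restore() {
    local f=${1:-/rw/config/rc.local}
    [ -s "$f-copy" ] || { echo "no saved copy at $f-copy" >&2; return 1; }
    cat -- "$f-copy" > "$f"
}
```

The DVM is rebooted after each step, as in the manual procedure. Writing with `cat ... >` instead of `mv` keeps the original file's ownership and executable bit.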

If you need to add new shortcuts to the Qubes app menu, do it in the default persistent dom0 mode.

Max memory in zram mode must exceed the size of dom0 on disk. Otherwise, zram mode will fail to start due to insufficient space! This error may occur after a dom0 update, as updates increase the size of dom0 on the disk.
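A quick way to check this rule before rebooting into zram mode might look like the sketch below. Both function names are hypothetical, and the sketch assumes that "size of dom0 on disk" means the space currently used on the root filesystem; the memory limit has to be supplied by you, in MiB.

```shell
#!/bin/bash
# Hypothetical helpers (not part of the original script).

# Succeed only if the memory limit ($2, MiB) is larger than the space
# dom0 uses on disk ($1, MiB).
zram_fits() {
    local used_mib=$1 max_mib=$2
    [ "$max_mib" -gt "$used_mib" ]
}

# Space currently used on / in MiB (uses GNU df options).
root_used_mib() {
    df --output=used -BM / | tail -n 1 | tr -dc '0-9'
}
```

In persistent mode, something like `zram_fits "$(root_used_mib)" 30720 || echo "dom0 is too large for zram mode"` would warn when the limit needs to be raised, for instance after a dom0 update.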

You can update templates in live modes. But update dom0 in persistent mode (default boot)!

You can make backups of all VMs (and dom0) in live modes.

If you want to add your custom kernel options for dom0 live modes, do it in dom0 persistent mode:

sudo nano /etc/grub.d/40_custom

and then update GRUB

sudo grub2-mkconfig -o /boot/grub2/grub.cfg

If you want to mask default Qubes boot into overlay mode, follow this simple guide

You can use ephemeral DVMs in default persistent dom0 boot. It will also provide strong forensic protection, but dom0 will retain metadata about ephemeral DVM launch. See this guide if you don’t want to install dom0 live modes and you only need ephemeral encrypted appVMs / DVMs in default persistent dom0. appVMs / DVMs in varlibqubes pool do not have anti-forensic protection in the default persistent dom0!

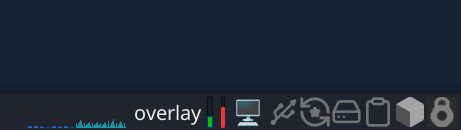

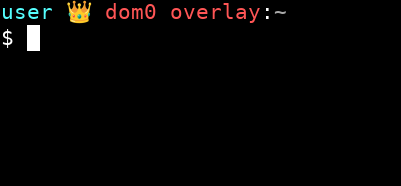

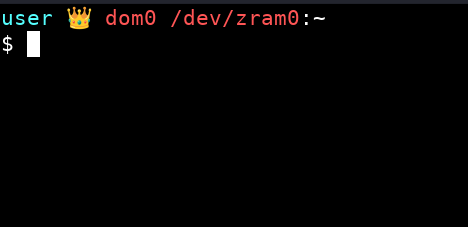

Checking dom0 mode

You can add a “Generic Monitor” widget to the XFCE panel and configure it to run the command `findmnt -n -o SOURCE /`. This widget will display which mode you’re currently in:
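If the raw device name is hard to read at a glance, the output of `findmnt` could be mapped to a short label. This is only a sketch: `dom0_mode_label` is a hypothetical helper, and the patterns are assumptions about what `findmnt` reports in each mode (a `/dev/zram*` device in zram mode, `overlay` in overlay mode), so check the actual output on your system first.

```shell
#!/bin/bash
# Hypothetical helper: map the root filesystem source to a short label.
# The patterns below are assumptions, not taken from the original script.
dom0_mode_label() {
    case $1 in
        /dev/zram*) echo "ZRAM-LIVE" ;;
        overlay*)   echo "OVERLAY-LIVE" ;;
        *)          echo "PERSISTENT ($1)" ;;
    esac
}
```

It would be called as `dom0_mode_label "$(findmnt -n -o SOURCE /)"`, for example from a small script used as the Generic Monitor command.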

You can also use this terminal theme so you can see which mode you’re currently in:

Press CTRL + H in Thunar in dom0 to show hidden files, then put this code into .bashrc in place of the default code:

# .bashrc

# Source global definitions

if [ -f /etc/bashrc ]; then

. /etc/bashrc

fi

# User specific environment

if ! [[ "$PATH" =~ "$HOME/.local/bin:$HOME/bin:" ]]

then

PATH="$HOME/.local/bin:$HOME/bin:$PATH"

fi

export PATH

###########################

export VIRTUAL_ENV_DISABLE_PROMPT=true

__qubes_update_prompt_data() {

local RETVAL=$?

__qubes_venv=''

[[ -n "$VIRTUAL_ENV" ]] && __qubes_venv=$(basename "$VIRTUAL_ENV")

__qubes_git=''

__qubes_git_color=$(tput setaf 10) # clean

local git_branch=$(git --no-optional-locks rev-parse --abbrev-ref HEAD 2> /dev/null)

if [[ -n "$git_branch" ]]; then

local git_status=$(git --no-optional-locks status --porcelain 2> /dev/null | tail -n 1)

[[ -n "$git_status" ]] && __qubes_git_color=$(tput setaf 11) # dirty

__qubes_git="‹${git_branch}›"

fi

__qubes_prompt_symbol_color=$(tput sgr0)

[[ "$RETVAL" -ne 0 ]] && __qubes_prompt_symbol_color=$(tput setaf 1)

return $RETVAL # to preserve retcode

}

if [[ -n "$PROMPT_COMMAND" ]]; then

PROMPT_COMMAND="$PROMPT_COMMAND; __qubes_update_prompt_data"

else

PROMPT_COMMAND="__qubes_update_prompt_data"

fi

PS1=''

PS1+='\[$(tput setaf 7)\]$(echo -ne $__qubes_venv)\[$(tput sgr0)\]'

PS1+='\[$(tput setaf 14)\]\u'

PS1+='\[$(tput setaf 15)\] 👑 '

PS1+='\[$(tput setaf 9)\]\h'

PS1+=" $(findmnt -n -o SOURCE /)"

PS1+='\[$(tput setaf 15)\]:'

PS1+='\[$(tput setaf 7)\]\w '

PS1+='\[$(echo -ne $__qubes_git_color)\]$(echo -ne $__qubes_git)\[$(tput sgr0)\] '

PS1+='\[$(tput setaf 8)\]\[$([[ -n "$QUBES_THEME_SHOW_TIME" ]] && echo -n "[\t]")\]\[$(tput sgr0)\]'

PS1+='\[$(tput sgr0)\]\n'

PS1+='\[$(echo -ne $__qubes_prompt_symbol_color)\]\$\[$(tput sgr0)\] '

Also see these guides for additional hardening of live modes:

- Anonymize hostname hardened template automatic installation of browser

- USB Kill Switch for Qubes OS

- Installation of Amnezia VPN and Amnezia WG: effective tools against internet blocks via DPI for China, Russia, Belarus, Turkmenistan, Iran. VPN with Vless XRay reality. Best obfuscation for WireGuard. Easy self‑hosted VPN. Bypass

- Apparmor profile for Qubes available!

P.S. Do not use the script from the second comment: it’s outdated, and I can no longer edit that message. I have flagged that comment for removal.