Background

I recently had my SSD die and go into read-only mode. Thankfully, I was able to retrieve all of my data, but it turns out that the biggest problem was my primary template. All of the reads from that template wore out the part of the disk where it was stored, and when the template’s EXT4 partition became corrupted, all VMs using that template failed to boot.

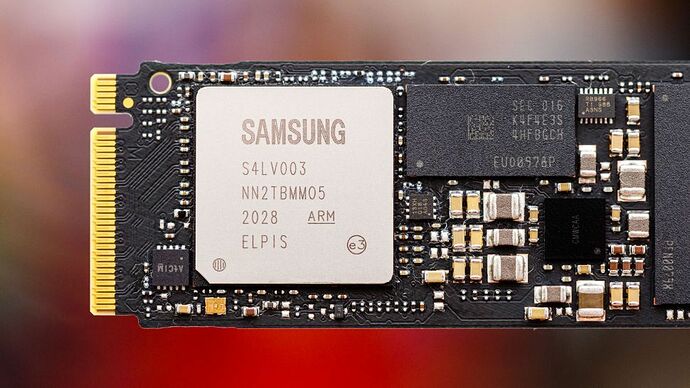

Most modern SSDs use TLC (despite Samsung calling its new models “3-bit MLC”, which, as an article on Ars Technica put it, is a lot like calling a red car “pink”; it’s misleading marketing jargon). TLC is not as robust as MLC, but MLC drives are increasingly rare and expensive.
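For context, the “bits” label maps directly onto how many charge levels each cell has to distinguish. A cell storing b bits needs 2^b distinguishable voltage states, so a “3-bit” cell is by definition TLC. Packing more levels into the same voltage window leaves smaller margins between them, which is the usual explanation for TLC’s lower endurance:

```latex
N_{\text{levels}} = 2^{b}, \qquad
\text{SLC: } 2^{1} = 2, \quad
\text{MLC: } 2^{2} = 4, \quad
\text{TLC: } 2^{3} = 8
```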

Best Practices for Storage Devices

Given the unique architecture of Qubes, I wondered what others are doing to improve the performance and endurance of their drives. I’m considering an SSD + HDD setup to get the performance of an SSD and the reliability of an HDD (maybe even a RAM disk for some things). However, designing a partition scheme and LVM storage layout for Qubes that makes the best use of the two devices is a bit complicated. On a normal Linux distro, I would put /var on the HDD and everything else on the SSD, but since each VM has its own /var inside LVM, that approach doesn’t carry over directly.
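Before deciding which volumes go on which disk, it helps to confirm which device the kernel sees as an SSD and which as an HDD. Below is a minimal sketch, assuming it runs in dom0 with sysfs mounted. `classify_block_devices` is a hypothetical helper name, not a Qubes tool. It reads the kernel’s `queue/rotational` flag under `/sys/block`, where `1` means rotational (HDD) and `0` means non-rotational (SSD). Virtual devices such as loop or device-mapper nodes may not reflect the underlying hardware, so treat their labels with caution.

```python
from pathlib import Path


def classify_block_devices(sys_block: Path = Path("/sys/block")) -> dict[str, str]:
    """Return {device_name: "SSD" or "HDD"} based on the kernel's rotational flag."""
    result: dict[str, str] = {}
    for dev in sorted(sys_block.iterdir()):
        flag_file = dev / "queue" / "rotational"
        if not flag_file.is_file():
            # Skip entries that expose no request queue information.
            continue
        rotational = flag_file.read_text().strip() == "1"
        result[dev.name] = "HDD" if rotational else "SSD"
    return result
```

Called with no argument in dom0, `classify_block_devices()` reads the real `/sys/block`. The optional path parameter exists only so the function can be pointed at a fake directory tree for testing. `lsblk -d -o NAME,ROTA` reports the same flag from the command line.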

There are also filesystems designed for wear-leveling on flash-based devices, such as YAFFS2 and UBIFS (both considered successors to JFFS2; LogFS was removed from the kernel because it was unmaintained). Many comments claim that flash controllers already implement wear-leveling, but I haven’t seen anything official confirming that this makes YAFFS2 unnecessary.
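To make the “controllers implement wear-leveling” claim concrete, here is a toy Python model of *dynamic* wear-leveling. Each time a logical block is rewritten, it is redirected to the least-erased free physical block instead of being overwritten in place. This is purely illustrative. It assumes a simplified device where every write costs one erase. Real SSD firmware (the flash translation layer) also handles garbage collection, static wear-leveling of cold data, and page-versus-erase-block granularity, and none of that is modelled here. The class name `ToyFlash` is hypothetical.

```python
class ToyFlash:
    """Toy model of dynamic wear-leveling (illustrative only).

    Assumption: every write to a physical block costs exactly one erase.
    """

    def __init__(self, num_physical: int) -> None:
        self.erase_counts = [0] * num_physical   # erases per physical block
        self.free = set(range(num_physical))     # physical blocks holding no live data
        self.mapping: dict[int, int] = {}        # logical block -> physical block

    def write(self, logical: int) -> int:
        """Write `logical` out of place and return the physical block used."""
        old = self.mapping.get(logical)
        if old is not None:
            # The previous copy is now stale, so its block can be reused.
            self.free.add(old)
        if not self.free:
            raise RuntimeError("no free physical blocks left")
        # Dynamic wear-leveling: pick the least-erased free block
        # (ties broken by lowest block number so results are deterministic).
        target = min(self.free, key=lambda b: (self.erase_counts[b], b))
        self.free.remove(target)
        self.erase_counts[target] += 1
        self.mapping[logical] = target
        return target
```

Rewriting a single logical block 1,000 times on a 4-block `ToyFlash` spreads the erases evenly (250 per physical block) instead of concentrating all 1,000 on one block. That even spread is the effect both flash filesystems like YAFFS2 and SSD controllers are aiming for.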

Suggestions from the Community

I’d like to hear whether anyone has recommendations for an SSD + HDD (+ RAM disk) setup on Qubes: how they set up their LVM, which filesystems they use, etc. Once we reach some kind of consensus, perhaps we can put together a community guide for best practices.

Thanks!