I have a read/write corruption issue with an NVMe drive passed to an HVM, either via qvm-block or as PCI passthrough. I don't have these issues when I attach the same device to a regular template-based VM.

Qubes: 4.2.4

The hardware:

- Asus TUF Gaming B550-Plus - Motherboard
- AMD Ryzen 7 5700G - CPU
- AMD RX 9060 XT 16 GB - Graphics card 1
- Asus nVidia RTX 3050 - Graphics card 2
- 2 x PCIe USB cards
- WD Black SN770 2TB NVMe drive - My main drive; no issues at all
- Crucial E100 480GB PCIe Gen4 NVMe M.2 SSD - The problem drive

The problem drive is in a secondary M.2 slot on the motherboard, which shares bandwidth with the SATA interface. On that interface I have an 8 TB HDD and a small 256 GB SSD.

When the drive is attached to an HVM, I can reproduce the issue every time by copying a 20 GB directory. The copy starts, runs for a little while, then stops, and journalctl in the HVM shows errors (screenshots below). After some minutes, journalctl shows more errors and a segfault. When that happens, Qubes freezes for a while, then resumes. After that I can keep using Qubes normally until I shut down the HVM. When the HVM shuts down, Qubes crashes.

The drive reports clean SMART data and passes its self-tests, and I have no problems doing large copies when I boot into regular Linux. I have also attached the drive to a regular template-based VM and done the same copy there with no issues. I checked for corruption with `diff -r`; please correct me if that is not good enough. In the HVMs, even read corruption has been frequent and easy to spot.

One thing that seems odd to me: when copying in a regular VM, the speed spikes high, then drops all the way to HDD speeds for a long time before climbing back up, even though the files in the copied folders should all be fairly similar.
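For what it's worth, `diff -r` compares file contents byte for byte, so it does catch corruption; what it cannot tell you is whether a difference came from a bad read of the source or a bad write to the copy. Below is a minimal sketch in Python (an untested illustration, not something run against this drive; the function names `sha256_file`, `hash_tree`, `compare_digests` and `main` are hypothetical) that hashes the source tree twice and the copy once, so unstable reads are reported separately from copy mismatches:

```python
import hashlib
import os
import sys


def sha256_file(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, read in 1 MiB chunks."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def hash_tree(root):
    """Map each regular file's path (relative to root) to its SHA-256 digest."""
    digests = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            if not os.path.isfile(full):  # skip broken symlinks, sockets, etc.
                continue
            digests[os.path.relpath(full, root)] = sha256_file(full)
    return digests


def compare_digests(a, b):
    """Return sorted (missing-from-b, extra-in-b, content-differs) path lists."""
    missing = sorted(set(a) - set(b))
    extra = sorted(set(b) - set(a))
    mismatched = sorted(p for p in set(a) & set(b) if a[p] != b[p])
    return missing, extra, mismatched


def main(src, dst):
    """Print problems and return 1 if any were found, else 0."""
    first = hash_tree(src)
    # Second pass over the source: if it disagrees with the first, the
    # *reads* are unstable, regardless of what was written to dst.
    second = hash_tree(src)
    unstable = sorted(p for p in first if first[p] != second.get(p))
    missing, extra, mismatched = compare_digests(first, hash_tree(dst))
    report = (
        ("unstable source read", unstable),
        ("missing in destination", missing),
        ("extra in destination", extra),
        ("content differs", mismatched),
    )
    for label, paths in report:
        for p in paths:
            print(f"{label}: {p}")
    return 1 if any(paths for _label, paths in report) else 0


if __name__ == "__main__" and len(sys.argv) == 3:
    # Usage: python3 verify_copy.py SOURCE_DIR COPY_DIR  (script name is hypothetical)
    sys.exit(main(sys.argv[1], sys.argv[2]))
```

One caveat: the two source passes run back to back, so parts of the second pass may be served from the Linux page cache rather than the drive. With a 20 GB tree and a VM with much less RAM, most files would likely have to be re-read from disk anyway, but to be sure you could run the hashing in separate runs with `sync; echo 3 > /proc/sys/vm/drop_caches` (as root) in between.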

I'm fairly sure this setup worked perfectly in this same slot before I added the new AMD graphics card and a new USB card in one of the remaining PCIe slots. Only one slot is still empty, and it is not physically accessible. I moved the nVidia card, formerly the primary graphics card, to the secondary PCIe slot. I cannot rule out Qubes updates, but my hunch leans towards the PCIe reshuffle as the origin of the problems. Then again, anyone who has done debugging knows how much a user's hunch is worth on things they don't understand, and I hope I'm not misleading other users or future readers.

While researching, I found that my motherboard has had issues with SMT in Qubes 4.1. I tried changing the BIOS setting from auto to disabled, but that changed nothing apart from a slightly cleaner journalctl in dom0. For the record, I have tried the `smt` option both on and off, and my issues have always been the same with either setting. I can't say whether disabling SMT in the BIOS would have fixed something back then.

I have tried limiting the problem drive to x1 in the BIOS, but that did not help.

The motherboard BIOS version is 3621. There is a newer stable version that I haven't flashed, because flashing the BIOS always makes me nervous. From my research (reading the AGESA changelog for AM4 on Wikipedia), I doubt it fixes anything relevant.

I haven't yet tried upgrading to 4.3 to see whether a newer Xen version fixes this, partly because of all the effort involved and partly because I'm afraid of breaking a working system or not being able to restore my VMs from backups.

Screenshots from journalctl, in case they contain anything relevant:

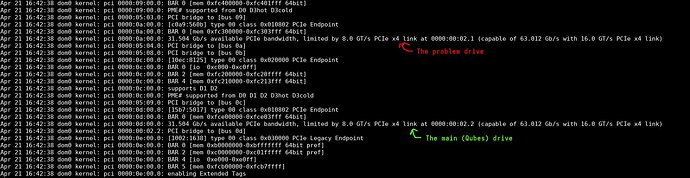

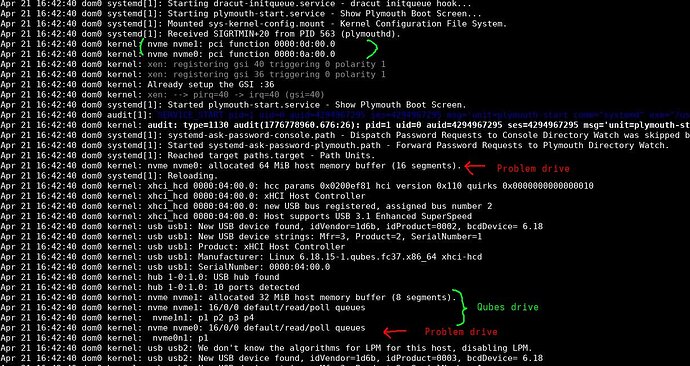

Drives found:

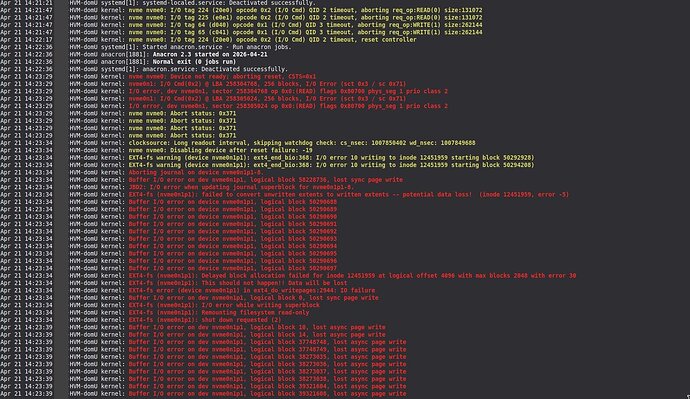

What dom0 reports about the problem drive when it errors:

What it looks like in the HVM when it happens:

While the burst of red lines is being logged, Qubes freezes, but it resumes afterwards and can be used normally.

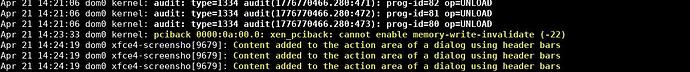

What it looks like in dom0 when Qubes crashes as I shut down the HVM:

disp5955 is unrelated to the problem. The problem starts at `memory-write-invalidate (-22)`, after which I kept using Qubes and closing VMs to prepare for the eventual crash.

If I shut down Qubes without shutting down the HVM first, I get a segfault dump in dom0, but I suspect that is unrelated: to someone unfamiliar with it like me, the segfault seems to be related to logging. Those screenshots were too large to include here.

Normally I would have just moved to 4.3 before posting here, since 4.2.4 will soon be unsupported, but I decided to post first because data corruption can be a significant problem for some people. For me, this case is just an inconvenience.